Photo Corners

A SCRAPBOOK OF SOLUTIONS FOR THE PHOTOGRAPHER

Enhancing the enjoyment of taking pictures with news that matters, features that entertain and images that delight. Published frequently.

Test Drive: Photoshop Depth Blur

20 June 2023

Among Photoshop's beta neural filters we've been anxious to try since upgrading to an M2 MacBook Pro is Depth Blur. Still in beta but much improved over earlier iterations, it promises to manage selective focus after exposure.

We're debuting a new feature with this review. Hover over an image to enlarge it, in this case by 1.75x. That makes the dialogs easier to examine in the rollovers. In an update, we've enlarged the entire rollover, making it easier to compare results.

This is the kind of thing smartphones do with Lidar technology (which uses lasers to measure distance) and computational photography to enhance the bokeh in images taken with wide angle lenses, which inevitably have little of the stuff to begin with.

But with Photoshop, your camera doesn't need Lidar.

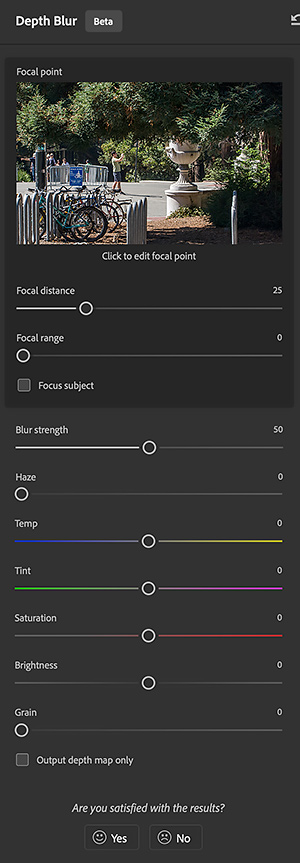

CONTROLS

We couldn't find any documentation to explain the interface or controls so we experimented over a few days. We may still have a detail or two wrong but here's what we learned.

A thumbnail of the image is displayed so you can click on a focal point. Alternatively you can use a slider to set the Focal Distance. We liked picking a focal point in the image, though. Setting the point of sharpest focus just seemed more precise than guessing at the distance.

The Focal Range slider functions like an aperture might in expanding or contracting the depth of field.

The Blur Strength slider manages the amount of blur (a little or a lot) in the blurred areas, which vary by distance.
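To make the interplay of those three controls concrete, here is a minimal sketch of how a blur amount could be derived for each pixel of a depth map. It is our own illustration, not Adobe's algorithm, which is undocumented. The function name `blur_radius`, the `max_radius` parameter and the 0 to 1 scale for every value are all assumptions.

```python
def blur_radius(depth, focal_distance, focal_range, blur_strength, max_radius=25.0):
    """Return a hypothetical blur radius in pixels for one depth-map value.

    Assumption: depth, focal_distance, focal_range and blur_strength are
    all normalized to the range 0..1 (0 is nearest, 1 is farthest).
    """
    # How far this pixel sits from the plane of sharpest focus.
    offset = abs(depth - focal_distance)

    # Pixels within half the focal range on either side stay sharp,
    # the way a smaller aperture widens depth of field.
    half_range = focal_range / 2.0
    if offset <= half_range:
        return 0.0

    # Beyond the range, blur grows with distance and is scaled by strength.
    falloff = (offset - half_range) / (1.0 - half_range)
    return max_radius * blur_strength * min(falloff, 1.0)


# One row of a made-up depth map, with focus set in the middle distance.
row = [0.1, 0.3, 0.5, 0.7, 0.9]
radii = [blur_radius(d, focal_distance=0.5, focal_range=0.2, blur_strength=0.5) for d in row]
```

In this sketch the middle value gets no blur while the nearest and farthest values get the most, which matches the behavior we saw: blur varies with distance from the focal point rather than being applied uniformly.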

A slider for Haze lightens the blurred area as if fog had descended.
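As a rough sketch of what a haze control like this might be doing, the snippet below blends a pixel toward white in proportion to how blurred it is. This is a guess at the general technique, not the filter's actual method. The function name `apply_haze`, the `blur_weight` input and the 0 to 1 scales are assumptions.

```python
def apply_haze(pixel, blur_weight, haze):
    """Blend an (r, g, b) pixel toward white to mimic fog.

    Assumption: blur_weight (how blurred the pixel is) and haze (the
    slider value) are both normalized to 0..1. Pixel channels are 0..255.
    """
    # Sharp pixels (blur_weight 0) are untouched; fully blurred pixels
    # receive the full haze amount.
    amount = haze * blur_weight
    return tuple(round(c + (255 - c) * amount) for c in pixel)


# A mid-gray pixel in a fully blurred area at half haze.
hazed = apply_haze((55, 55, 55), blur_weight=1.0, haze=0.5)
```

Tying the haze to the blur weight is what keeps the in-focus subject at full contrast while the blurred areas lighten.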

Those are the major controls. But below them are sliders for Color Temperature, Tint, Saturation, Brightness and Grain. These all appeared to apply globally.

There's also an option for outputting a depth map only. That displays the depth mask used by the filter, which can be instructive.

PHONE

Naturally we first tried it on a small phone image. We thought it would be a sort of assistive device for our iPhone 6 Plus (which can't perform that trick in camera).

Our iPhone has only one lens and it's a wide angle lens. So everything we shoot is in focus from the end of our arm to infinity. We've never seen any blur that wasn't camera shake in our iPhone photos.
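The arithmetic behind that deep focus can be sketched with the standard hyperfocal distance formula, H = f²/(N·c) + f. The values below are assumptions for illustration, not measurements of our phone: a 4.15mm focal length, an f/2.2 aperture and a 0.004mm circle of confusion for a small phone sensor.

```python
def hyperfocal_mm(focal_length_mm, f_number, coc_mm):
    """Hyperfocal distance in millimeters: H = f^2 / (N * c) + f."""
    return focal_length_mm ** 2 / (f_number * coc_mm) + focal_length_mm


# Assumed, illustrative values for a small-sensor phone camera.
H = hyperfocal_mm(focal_length_mm=4.15, f_number=2.2, coc_mm=0.004)

# Focused at the hyperfocal distance, everything from H / 2 to infinity
# is acceptably sharp.
near_limit_m = H / 2 / 1000
hyperfocal_m = H / 1000
```

Under those assumptions the hyperfocal distance works out to about two meters, with acceptable sharpness starting around one meter. A lens that short simply has very little depth to throw out of focus.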

We selected an image of the orchestra seating at War Memorial Opera House in San Francisco's Civic Center. The view was down the row across the theater with a few patrons in their seats at various distances.

We set the focal point on various patrons with a click and then minimized the focal range. You can see the results (including the distance map) in the preview to the left of the controls in the screen shots below:

Note the small blue dot in the thumbnail. That's where we set focus.

We were able to focus as finely as on the nearest smartphone (as seen in the shot on the headlines page, although our Near example here is focused on the back of the woman's head). Which was pretty impressive.

We think we were able to focus the woman reading her program in the Middle shot much more emphatically than we ever could in camera because she was quite distant from us.

CAMERA

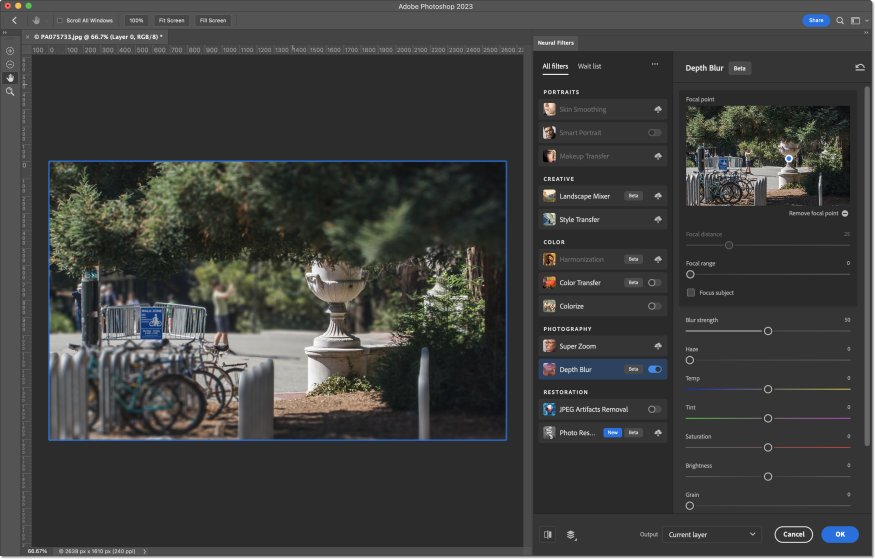

Next we tried the Depth Blur filter on a Micro Four Thirds image that was also in focus from the foreground to the background.

Again, we clicked on the point in the image's thumbnail where we wanted sharp focus. For the Near shot we clicked on the bikes, for the Medium shot on the large vase near Sather Gate in Berkeley and for the Distance shot on the photographer looking through Sather Gate.

We were quite pleased with the results. Here are the screen shots without settings:

In this series we adjusted the Range and Strength sliders as well to vary the effect. We really liked how well that worked. You aren't boxed into a fixed depth of field.

BLURRED IMAGES

You might wonder what happens to an image that is already blurred.

The filter only blurs; it doesn't sharpen. So if you select an area of the image that is not sharp, it only gets less so.

PROCESSING

Earlier versions apparently sent the data to the cloud for processing.

We didn't see any indication of that with this version. The processing appeared to be local (we can't tell, frankly) and quite quick (that we could indeed tell) on the M2 MacBook Pro.

CONCLUSION

We found the controls to be robust enough to get the effect we had imagined. And what we had imagined was not subtle. So it's quite a powerful filter.

It does not add or subtract subject matter from the image but applies a targeted blur based on calculated distance, much as aperture does in camera.

But it isn't aperture control. It simulates aperture control. And the depth map shows what the simulation's assumptions about distance are.

After all, it is dealing with a two dimensional image, which likely does not contain that Lidar depth data. But that's also why AI is harnessed. A mere calculation would not suffice to determine depth across an image. But training the software to recognize scenes can mitigate that limitation.

And it does that very well.

It's not the sort of edit we'd routinely make (like fixing geometry or optics) but it doesn't take much imagination to envision cases where it could help reveal the subject of an image more effectively than pointing to it more intrusively.

In any case, it's just a selective focus tool you can use after capture. And since you can't always tell how aperture is affecting focus in camera, that can be very helpful.

Four corners for this delightful tool.